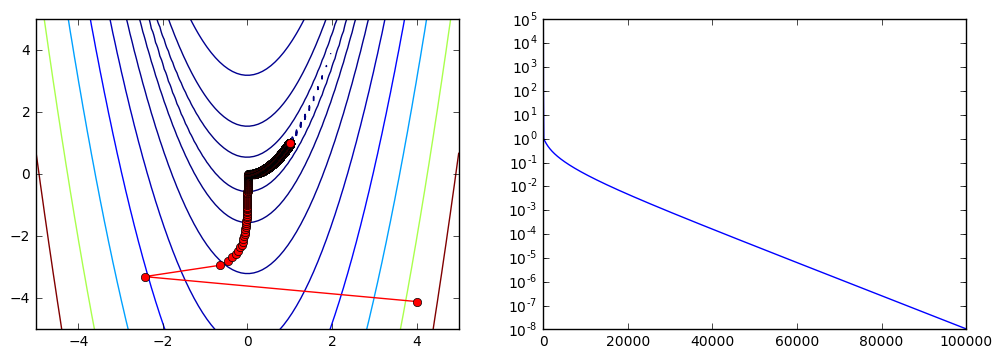

`optimize.minimize` performs the minimization of a scalar function of one or more variables. In general, the optimization problems are of the form:

$$\text{minimize } f(x) \quad \text{subject to} \quad g_i(x) \geq 0,\; i = 1, \ldots, m, \qquad h_j(x) = 0,\; j = 1, \ldots, p$$

where $x$ is a vector of one or more variables.

The SciPy library has three built-in methods for scalar minimization; one of them, `brent`, is an implementation of Brent's algorithm. `minimize_scalar()` has a `method` keyword argument that you can specify to control the solver that's used for the optimization. When the function has more than one local minimum, `minimize_scalar()` is not guaranteed to find the global minimum of the function.

Newton's method uses the first and the second derivative (jacobian and hessian) of the objective function in each iteration. The hessian matrix of the Rosenbrock function is calculated with Maxima as `rosen_d2`, by differentiating `rosen_d` with respect to `x` and `y`. The Rosenbrock function is then minimized using Newton's method by passing both the jacobian and the hessian matrix to `optimize.minimize`.

L-BFGS is a low-memory approximation of BFGS. Concretely, the Scipy implementation is L-BFGS-B, which can handle box constraints using the `bounds` argument of `optimize.minimize`. On convergence, the result reports the message 'CONVERGENCE: NORM_OF_PROJECTED_GRADIENT_<=_PGTOL'.
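As a minimal sketch of Newton's method and L-BFGS-B on the Rosenbrock function, the following may help. It assumes Newton's method is selected with `method='Newton-CG'` (SciPy's Newton conjugate-gradient variant) and uses SciPy's own `optimize.rosen_der` and `optimize.rosen_hess`. The starting point `x0` and the bounds are hypothetical values chosen for illustration, not taken from the post.

```python
import numpy as np
from scipy import optimize

# Hypothetical starting point (an assumption, not from the original post).
x0 = np.array([-1.0, 2.0])

# Newton-type method: needs both the jacobian and the hessian.
newton = optimize.minimize(
    optimize.rosen, x0,
    method='Newton-CG',
    jac=optimize.rosen_der,
    hess=optimize.rosen_hess,
)

# L-BFGS-B with box constraints: one (min, max) pair per variable.
# These hypothetical bounds keep x <= 0.5, so the unconstrained
# minimum at (1, 1) lies outside the feasible box.
bounds = [(-2.0, 0.5), (-2.0, 2.0)]
lbfgsb = optimize.minimize(
    optimize.rosen, x0,
    method='L-BFGS-B',
    jac=optimize.rosen_der,
    bounds=bounds,
)

print('Newton-CG:', newton.x, newton.message)
print('L-BFGS-B :', lbfgsb.x, lbfgsb.message)
```

Because the box excludes the unconstrained minimum, the L-BFGS-B result should end up on the boundary `x = 0.5` instead of at `(1, 1)`.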
The examples can also be done using other Scipy functions.

We'll find the minimum in considerably fewer function evaluations of the different points. Message: 'Optimization terminated successfully.'

Sometimes we can use gradient methods, like BFGS, without knowing the gradient: `optimize.minimize(optimize.rosen, x0, method='BFGS')`. Message: 'Desired error not necessarily achieved due to precision loss.' In this case, we haven't achieved the optimization. We will see more about gradient-based minimization in the next section.

These methods need the derivatives of the cost function. In the case of the Rosenbrock function, the derivative is provided by Scipy; anyway, here's the simple calculation in Maxima. The function is defined as `rosen: (1-x)^2 + 100*(y-x^2)^2`, that is,

$$100 (y - x^2)^2 + (1 - x)^2$$

and its derivative is stored as `rosen_d`.

The conjugate gradient method is similar to a simpler gradient descent, but it uses conjugate vectors: in each iteration the search moves in a direction conjugate to all the previous steps: `optimize.minimize(optimize.rosen, x0, method='CG', jac=optimize.rosen_der)`

BFGS calculates an approximation of the hessian of the objective function in each iteration; for that reason it is a Quasi-Newton method (more on Newton's method elsewhere in this post): `optimize.minimize(optimize.rosen, x0, method='BFGS', jac=optimize.rosen_der)`

BFGS achieves the optimization in fewer evaluations of the cost and jacobian functions than the conjugate gradient method; however, the calculation of the hessian approximation can be more expensive than the products of matrices and vectors used in the conjugate gradient method.
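To compare the gradient-based calls above side by side, here is a small sketch that runs CG and BFGS with the analytic jacobian, plus BFGS with a finite-difference gradient. The starting point `x0` is a hypothetical choice (the post does not show its value here), and the evaluation counts will depend on it and on the SciPy version.

```python
import numpy as np
from scipy import optimize

# Hypothetical starting point (an assumption, not from the original post).
x0 = np.array([-1.2, 1.0])

# Keyword arguments for each run; omitting `jac` makes SciPy
# approximate the gradient with finite differences.
runs = {
    'CG with jac': dict(method='CG', jac=optimize.rosen_der),
    'BFGS with jac': dict(method='BFGS', jac=optimize.rosen_der),
    'BFGS without jac': dict(method='BFGS'),
}

results = {}
for label, kwargs in runs.items():
    res = optimize.minimize(optimize.rosen, x0, **kwargs)
    results[label] = res
    # nfev / njev: number of cost-function / jacobian evaluations.
    print(f"{label}: x={res.x}, nfev={res.nfev}, njev={res.njev}, "
          f"message={res.message!r}")
```

Checking `nfev` and `njev` for each run is a simple way to see how the number of evaluations differs between methods.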

Optimization methods in Scipy, by Modesto Mas

Mathematical optimization is the selection of the best input in a function to compute the required value. In the case we are going to see, we'll try to find the best input arguments to obtain the minimum value of a real function, called in this case the cost function. In the next examples, the functions `minimize_scalar` and `minimize` will be used.
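As a minimal sketch of the two interfaces, `optimize.minimize_scalar` for one variable and `optimize.minimize` for several, consider the following. The one-variable `cost` function and the starting point `x0` are hypothetical examples, and Nelder-Mead is picked here only because it needs no derivatives.

```python
import numpy as np
from scipy import optimize


def cost(x):
    """Hypothetical one-variable cost function with its minimum at x = 2."""
    return (x - 2.0) ** 2 + 1.0


# Scalar case: minimize_scalar uses Brent's method by default.
scalar_result = optimize.minimize_scalar(cost)

# Multivariate case: the Rosenbrock function shipped with SciPy.
# x0 is an arbitrary (hypothetical) starting point.
x0 = np.array([-1.0, 2.0])
multi_result = optimize.minimize(optimize.rosen, x0, method='Nelder-Mead')

print(scalar_result.x, scalar_result.fun)
print(multi_result.x, multi_result.message)
```

Both calls return a result object whose `x` attribute holds the input found and whose `fun` attribute holds the value of the cost function there.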